Global communication is entering a new era. Meta has introduced an AI-powered feature that automatically translates videos on Instagram and Facebook, going far beyond traditional subtitles.

This technology not only translates speech but also recreates the creator’s voice and can synchronize lip movements. The result can sound natural enough that videos appear to have been originally recorded in another language.

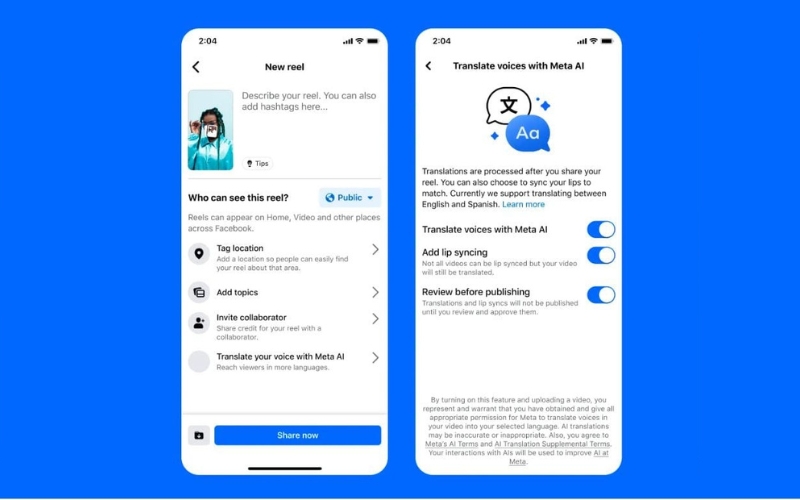

The process is straightforward. Creators can enable translation when publishing a video and select their target languages. The AI then transcribes the speech, translates the transcript, and generates a new audio track that closely matches the original voice. In some cases, it also adjusts lip movements for better synchronization.

Currently, the feature supports languages such as English, Spanish, Portuguese, and Hindi, with ongoing expansion into additional markets. However, it is still being rolled out gradually, meaning access may be limited for some users.

The impact on content creators is substantial. A single video can now reach global audiences without the need for multiple recordings or high production costs. This opens new opportunities for growth and visibility.

Despite these advances, the technology still has limitations. It performs best with clear audio and a single speaker. Cultural nuances may not always translate accurately, and lip-syncing can occasionally feel unnatural.

From a strategic standpoint, this advancement does not eliminate the need for human translators. Instead, it shifts their role toward quality control, cultural adaptation, and high-level linguistic refinement.

We are moving toward a future where language is less and less of a barrier. Content will become increasingly global, accessible, and driven by artificial intelligence.